A flood of new child sexual abuse material created by artificial intelligence threatens to overwhelm authorities already held back by outdated technology and laws, according to a new report released Monday by Stanford University's Internet watchdog.

Over the past year, new AI technology has made it easier for criminals to create explicit images of children. Now, Stanford researchers warn that the National Center for Missing and Exploited Children, a nonprofit organization that serves as a central coordinating agency and receives most of its funding from the federal government, does not have the resources to combat the growing threat.

Founded in 1998, the organization's CyberTipline is a federal clearinghouse that processes all reports of child sexual abuse material (CSAM) online and is used by law enforcement agencies in criminal investigations. However, many of the tips it receives are incomplete or riddled with inaccuracies, and its small staff struggles to keep up with the volume.

“In the coming years, CyberTipline will almost certainly be flooded with hyper-realistic AI content, making it even more difficult for law enforcement to identify the real children they need to rescue,” said Shelby Grossman, one of the report's authors.

The National Center for Missing and Exploited Children is on the front lines of a new fight against AI-generated sexually exploitative images, an emerging area of crime that lawmakers and law enforcement have yet to define. Already, AI-generated deepfake nude images are circulating in schools, and some lawmakers are taking action to ensure such content is considered illegal.

Researchers say AI-generated CSAM images are illegal if they contain real children or if images of real children were used as training data. However, one of the report's authors said synthetically created images that do not contain real images may be protected as free speech.

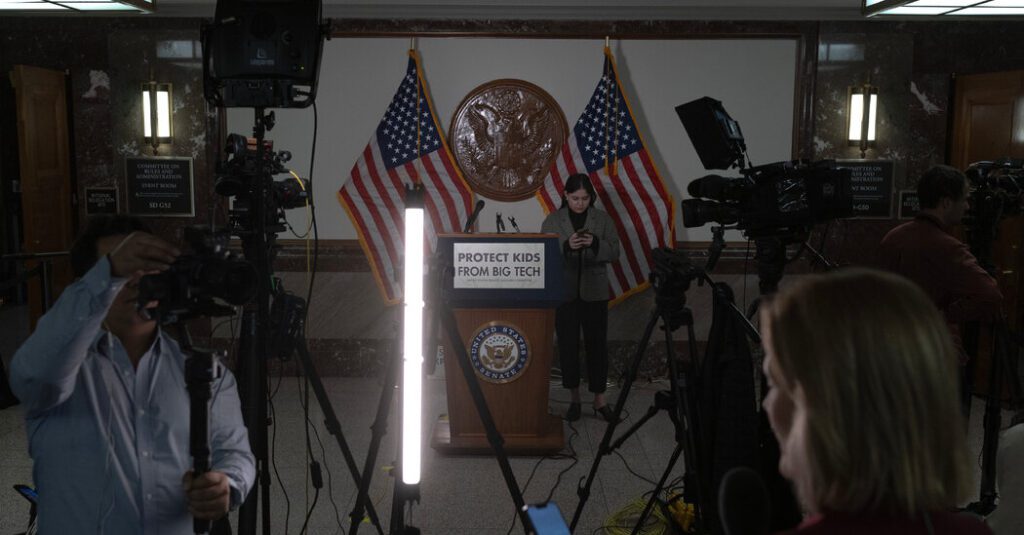

Public outrage over the spread of child sexual abuse images online exploded at a recent congressional hearing with the CEOs of Meta, Snap, TikTok, Discord and X, where lawmakers criticized the executives for not doing enough to protect young children online.

The National Center for Missing and Exploited Children, which accepts tips from individuals and from companies like Facebook and Google, is advocating for legislation to increase its funding and give it more access to technology. Stanford researchers said the organization gave them access to employee interviews and to its systems for the report, which identifies vulnerabilities in systems that need updating.

“Over the years, the complexity of reporting and the seriousness of crimes against children have continued to evolve,” the group said in a statement. “So by leveraging new technology solutions throughout the CyberTipline process, more children will be protected and criminals will be held accountable.”

The Stanford researchers found that the organization needs to change its reporting system so that law enforcement can determine which reports involve AI-generated content, and so that companies reporting potentially abusive content on their platforms fill out the form completely.

Fewer than half of all reports submitted to CyberTipline were “actionable” in 2022, either because the companies reporting the abuse failed to provide enough information or because the image in a tip had spread quickly online and been reported too many times. The tip line has an option to flag whether the content of a tip is a possible meme, but many don't use it.

On a single day earlier this year, a record 1 million reports of child sexual abuse material flooded the federal clearinghouse. Investigators worked for weeks to deal with the unusual spike. Many of the reports turned out to involve a meme image that people were sharing across platforms to express outrage, not out of malice. Even so, the reports consumed considerable investigative resources.

Alex Stamos, one of the authors of the Stanford University report, said this trend will only get worse as AI-generated content accelerates.

“A million identical images is hard enough. If AI creates a million separate images, they'll break down,” Stamos said.

The Center for Missing and Exploited Children and its contractors are restricted from using cloud computing providers and are required to store images locally on their computers. The researchers found that this requirement made it difficult to build and use the specialized hardware used to create and train AI models for research.

The organization typically does not have the technology needed to use facial recognition software widely to identify victims and offenders. Much of the report processing is still manual.